Intro

Bloom filter is a probabilistic data structure for searching element in a data set.It is similar to HashSet, similarly it tells us whether the set contains certain element or not. Difference is the output of contains(element)=TRUE is futuristic.

In our example we set futuristic value to 0.01, which means the answer "It contains" is 99% correct.

Read more about Bloom filter from here: https://en.wikipedia.org/wiki/Bloom_filter

Scenario

We create two Arrays of random elements. Elements count in each array is 1,000,000.Then we insert the first array into BloomFilter, and we iterate the first array and check if the item contains in BloomFilter. Second array is used only for checking non-existing elements.

We do the same for HashSet as described above.

Benchmarking code

We used customized version of Bloom filter which can accept byte array.(Previous version of this blog was using encoding of string for every put and contains, which was misguiding the performance of bloom filter)

source code:

(Source code is not organized for compilation, please modify it for your use)

Performance output:

Output

Testing BloomFilter 1000000 elements

add(): 0.176s, 5681818.181818183 elements/s

contains(), existing: 0.171s, 5847953.216374269 elements/s

Testing HashSet 1000000 elements

add(): 0.181s, 5524861.878453039 elements/s

contains(), existing: 0.08s, 1.25E7 elements/s

Memory size:

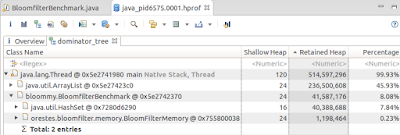

BloomFilter is the winner here. With 99% correctness the memory footprint is almost 40 times smaller than HashSet.

If we reduce correctness to 90%, then the memory footprint is reduced to 80 times.

Conclusion

We saw that BloomFilter as fast as HashMap. However, it is very space efficient.If we have a list of URLs in HashMap in Memory, By using BloomFilter we can reduce it to 40 times. For example, if occupied memory is 500 Mb it can be reduced to 12 Mb with correctness of 99%

Comments

Post a Comment